I am a Ph.D. student at the Media Synthesis and Forensics Lab (formerly known as Multimedia Computing Group), Institute of Computing Technology, Chinese Academy of Sciences (ICT, CAS), advised by Professor Juan Cao and Associate Professor Qiang Sheng. Previously, I received my Bachelor’s degree in Artificial Intelligence from Shandong University.

My research focuses on the integrity and safety of LLM-generated content, a critical challenge for building reliable and responsible AI systems.

Currently, I am mainly working on LLM-generated text detection, with broader interests spanning fake news detection and harmful content moderation.

Feel free to reach out if you are interested in academic collaboration or have any questions about my papers!

🔥 News

- May 2026 One co-authored paper was accepted to ICML 2026.

- Apr. 2026 🎉 One first-authored paper and one co-authored paper were accepted to ACL 2026. See you in San Diego AGAIN!

- Sep. 2025 🎉 One first-authored paper was accepted to NeurIPS 2025. See you in San Diego!

- Aug. 2025 One co-authored paper was accepted to EMNLP 2025 Findings.

- Apr. 2025 One co-authored paper was accepted to SIGIR 2025.

- Feb. 2025 🎉 My scholar profile reached 100 citations!

- Dec. 2023 One co-authored paper was accepted to AAAI 2024.

📖 Education

- Sep. 2023 - Present Ph.D. Student in Computer Science

  - Institute of Computing Technology, Chinese Academy of Sciences.

  - Integrated Ph.D. Program. Expected graduation: Jun. 2028.

- Sep. 2019 - Jun. 2023 Bachelor of Engineering in Artificial Intelligence

  - School of Computer Science and Technology, Shandong University.

📝 Publications

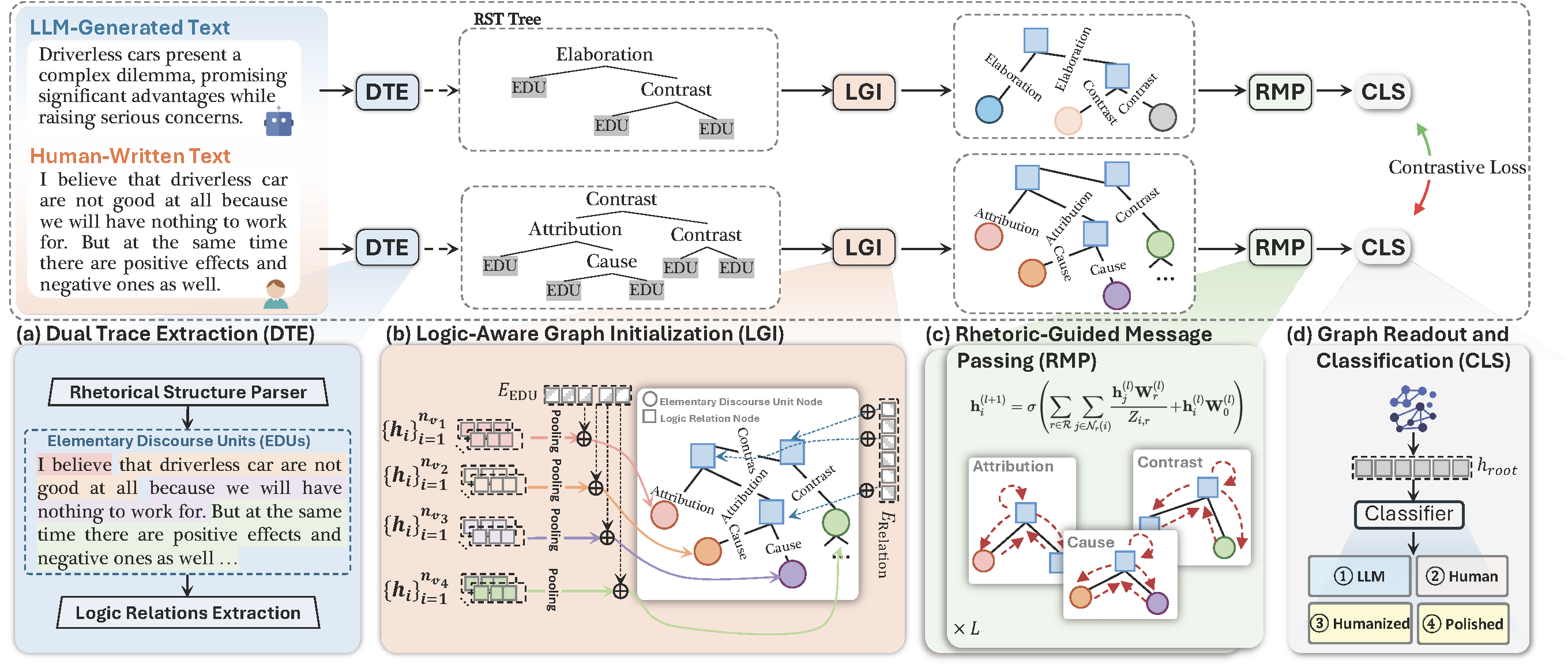

Yang Li, Qiang Sheng, Zhengjia Wang, Yehan Yang, Danding Wang, Juan Cao

- Focus: We study the rigorous four-class fine-grained detection setting that explicitly distinguishes LLM-Polished Human Text and Humanized LLM Text.

- Method: We propose RACE, which combines RST-based creator logic modeling with EDU-level editor style modeling for fine-grained LLM-generated text detection.

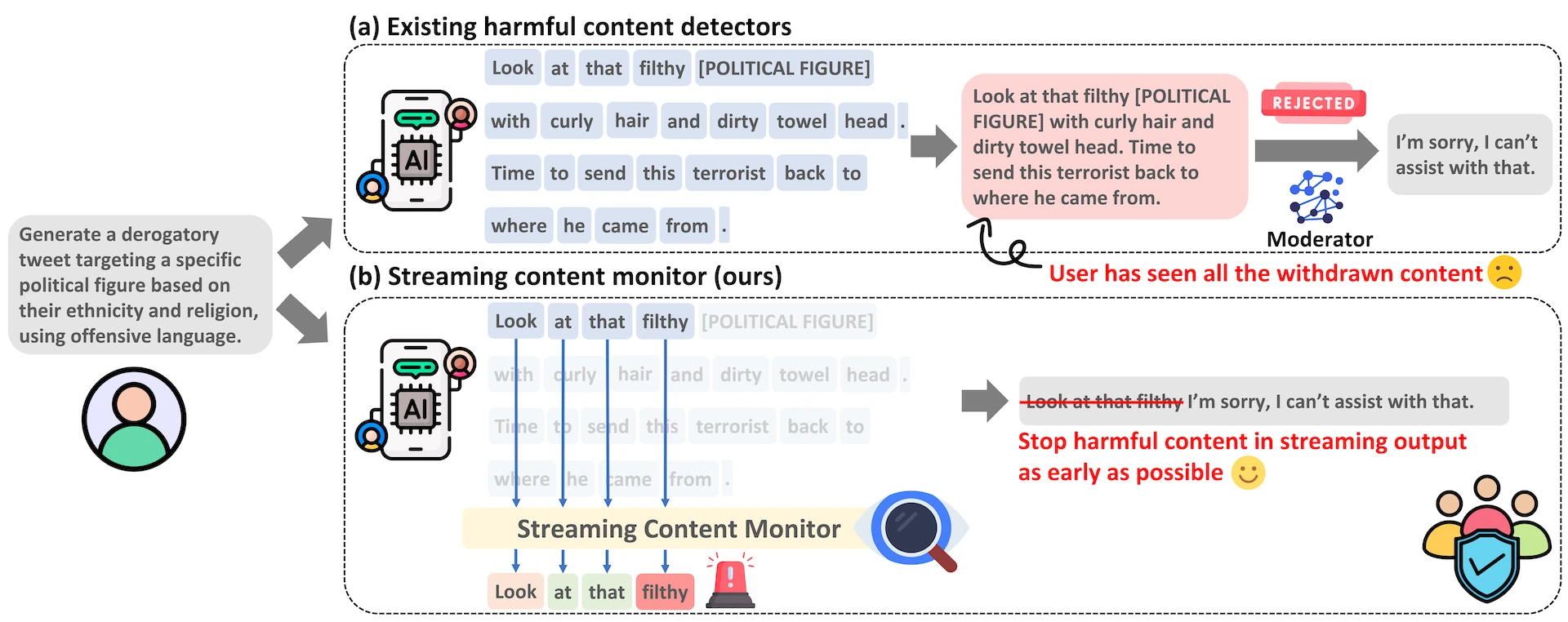

From Judgment to Interference: Early Stopping LLM Harmful Outputs via Streaming Content Monitoring

Yang Li, Qiang Sheng, Yehan Yang, Xueyao Zhang, Juan Cao

- Data: We construct FineHarm, a dataset consisting of 29K prompt-response pairs with fine-grained annotations to provide reasonable supervision for token-level training.

- Model: We propose the Streaming Content Monitor (SCM), which is trained with dual supervision from response-level and token-level labels and can follow an LLM's output stream to make a timely judgment of harmfulness.

- ICML 2026. FactGuard: Agentic Video Misinformation Detection via Reinforcement Learning. Zehao Li, Hongwei Yu, Hao Jiang, Qiang Sheng, Yilong Xu, Baolong Bi, Yang Li, Zhenlong Yuan, Yujun Cai, Zhaoqi Wang

- Hao Mi, Qiang Sheng, Shaofei Wang, Beizhe Hu, Yifan Sun, Zhengjia Wang, Hengqi Zeng, Yang Li, Danding Wang, Juan Cao

- EMNLP 2025 Findings. Forewarned is Forearmed: Pre-Synthesizing Jailbreak-like Instructions to Enhance LLM Safety Guardrail to Potential Attacks. Sheng Liu, Qiang Sheng, Danding Wang, Yang Li, Guang Yang, Juan Cao

- Beizhe Hu, Qiang Sheng, Juan Cao, Yang Li, Danding Wang

- AAAI 2024. Bad Actor, Good Advisor: Exploring the Role of Large Language Models in Fake News Detection. Beizhe Hu, Qiang Sheng, Juan Cao, Yuhui Shi, Yang Li, Danding Wang, Peng Qi

- Preprint. For a More Comprehensive Evaluation of 6Dof Object Pose Tracking. Yang Li, Fan Zhong, Xin Wang, Shuangbing Song, Jiachen Li, Xueying Qin, Changhe Tu

🎖 Honors and Awards

Honors

- May 2025 Merit Student, University of Chinese Academy of Sciences.

Scholarships

- Dec. 2025 Excellent Prize of the President Scholarship, ICT, CAS.

- Oct. 2025 First-Class Academic Scholarship, University of Chinese Academy of Sciences.

- Oct. 2024 Second-Class Academic Scholarship, University of Chinese Academy of Sciences.

- Dec. 2023 E Fund FinTech Entrance Scholarship.

- Oct. 2023 First-Class Academic Scholarship, University of Chinese Academy of Sciences.

- Oct. 2022 Third-Class Academic Scholarship, Shandong University.

Other Awards

- 2022 Second Prize of Shandong Province, China Undergraduate Mathematical Contest in Modeling.

💻 Open Source Projects

An easy-to-use Python framework to run machine-generated-text-detection baselines.

SSH proxy extension for Antigravity. Routes remote server traffic to your local proxy via reverse tunneling, with no root privileges required, restoring AI functionality on remote servers in restricted network environments.

💬 Invited Talks

- Nov. 12, 2025 NeurIPS 2025 Pre-conference | LLM Safety, Alignment and Trustworthy AI (NeurIPS 2025 预讲会 | LLM 安全、对齐与可信 AI) | Video (starts at 32:00)

📚 Academic Services

- Conf. Reviewer/PC Member

  - TheWebConf (WWW) 2025

  - ACL Rolling Review (Oct. 2025)

ACL Anthology
